Scraping 200,000 Company Records in One Day with Python (Source Code)

2019-08-14


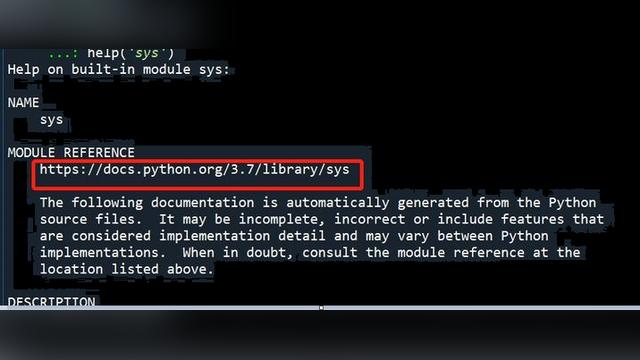

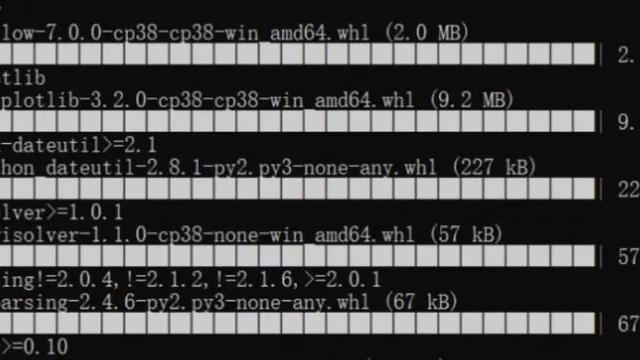

Crawler environment

Python 3.7 + PyCharm

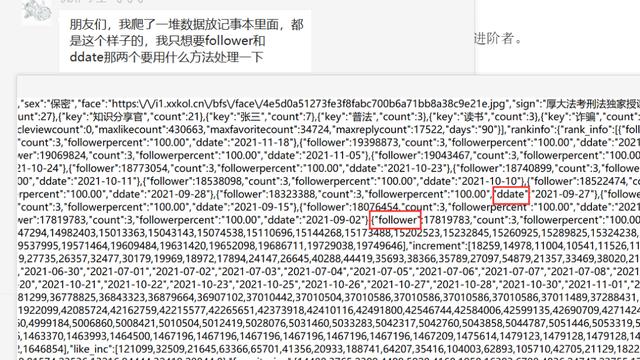

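I recently came across a site, 首商网 (sooshong.com), that lists well over a million companies and yet has no anti-scraping measures at all. For those of us who enjoy writing crawlers that is hard to resist, so I knocked out a script straight away. It runs three rounds of concurrent requests with 20 threads each; after five or six hours it had collected 200,000 records. Very satisfying!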
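The three rounds of concurrency can be pictured as a small pipeline in which each round maps a worker over the output of the previous one. The sketch below is only an illustration of that idea, not the script from this post: `run_stage`, `crawl`, the three stub workers and the `example.invalid` URLs are all hypothetical, and the stubs return canned values instead of making HTTP requests.

```python
from concurrent.futures import ThreadPoolExecutor


def run_stage(worker, items, max_workers=20):
    """Run worker over items in a thread pool and flatten the results.

    Each worker call returns a list (possibly empty). A call that raises
    is reported and skipped, so one bad page does not stop the stage.
    """
    results = []
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = [pool.submit(worker, item) for item in items]
        for future in futures:  # collect in submission order
            try:
                results.extend(future.result())
            except Exception as exc:
                print('skipped one item:', exc)
    return results


# Hypothetical stand-ins for the three rounds; real workers would fetch and parse pages.
def list_page_to_company_links(page_url):
    return [page_url + '/company-a', page_url + '/company-b']


def company_page_to_detail_link(company_url):
    return [company_url + '/detail']


def detail_page_to_row(detail_url):
    return [[detail_url, 'example field']]


def crawl(list_pages):
    """Chain the three rounds: listing pages -> company pages -> detail rows."""
    company_links = run_stage(list_page_to_company_links, list_pages)
    detail_links = run_stage(company_page_to_detail_link, company_links)
    return run_stage(detail_page_to_row, detail_links)


rows = crawl(['http://example.invalid/p1', 'http://example.invalid/p2'])
print(len(rows))
```

Returning the results from each stage, rather than appending to a shared global list, keeps the rounds independent and makes each one easy to test with stub workers like these.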

As usual, the code comes next. All comments and explanations are in the code itself, and it can be run as is:

# -*- coding: utf-8 -*-
# This is the original asynchronous crawler; it has not been wrapped into functions.
import asyncio
import aiohttp
import time
from bs4 import BeautifulSoup
import csv
import requests
from concurrent.futures import ThreadPoolExecutor, wait, ALL_COMPLETED

# Proxy settings (defined here but not passed to any of the requests below)
pro = 'zhaoshuang:LINA5201314@14.215.44.251:28803'
proxies = {'http': 'http://' + pro,
           'https': 'https://' + pro
           }

# Request headers
headers = {'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit'
                         '/537.36 (KHTML, like Gecko) Chrome/65.0.3325.181 Safari/537.36'}

# Process the listing pages in batches of 50; everything below runs once per batch
for k in range(1, 1651, 50):
    # Stage 1: use a thread pool to collect the company links from each listing page
    ########################################################################################################################
    wzs = []

    def parser(url):
        print(url)
        try:
            response = requests.get(url, headers=headers)
            soup1 = BeautifulSoup(response.text, "lxml")
            # body > div.list_contain > div.left > div.list_li > ul > li:nth-child(1) > table > tbody > tr > td:nth-child(3) > div.title > a
            wz = soup1.select('div.title')
            for i in wz:
                wzs.append(i.contents[0].get("href"))
            time.sleep(1)
        except Exception:
            print('Company is under review')

    urls = ['http://www.sooshong.com/c-3p{}'.format(num) for num in range(k, k + 50)]
    # Use a thread pool to speed up the crawl. Each batch holds 50 URLs, so up to 50 threads could be used.
    # Create the executor. The right thread count differs from site to site:
    # too many threads and the site cannot keep up, which loses data.
    executor = ThreadPoolExecutor(max_workers=10)
    # submit() takes the function first, followed by its arguments (more than one is allowed)
    future_tasks = [executor.submit(parser, url) for url in urls]
    # Wait for every thread to finish before moving on
    wait(future_tasks, return_when=ALL_COMPLETED)
    print('Listing-page links collected!')

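    ########################################################################################################################
    # Stage 2: use a thread pool to collect the detail-page link from each company page
    # Define the function that extracts what is needed from each page
    wzs1 = []

    def parser(url):
        # Parse the page with BeautifulSoup CSS selectors
        try:
            res = requests.get(url, headers=headers)
            # Parse the response body
            soup = BeautifulSoup(res.text, "lxml")
            # Find the link to the sub-page so that the sub-page can be scraped later
            # Selectors for select() come from the browser: click the element > Inspect > Copy selector
            lianjie = soup.select('#main > div.main > div.intro > div.intros > div.text > p > a')
            lianjie = lianjie[0].get('href')
            wzs1.append(lianjie)
            print(lianjie)
        except Exception:
            print('Failed to parse company page')

    # Use a thread pool to speed up the crawl. Each batch holds 50 URLs, so up to 50 threads could be used.
    # Create the executor. The right thread count differs from site to site:
    # too many threads and the site cannot keep up, which loses data.
    executor = ThreadPoolExecutor(max_workers=10)
    # submit() takes the function first, followed by its arguments (more than one is allowed)
    future_tasks = [executor.submit(parser, url) for url in wzs]
    # Wait for every thread to finish before moving on
    wait(future_tasks, return_when=ALL_COMPLETED)
    print('Detail-page links collected!')

    """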

    # Alternative (disabled): fetch the company pages asynchronously
    ########################################################################################################################
    async def get_html(sess, ur):
        try:
            proxy_auth = aiohttp.BasicAuth('zhaoshuang', 'LINA5201314')
            html = await sess.get(ur,
                                  headers=headers)  # , proxy='http://'+'14.116.200.33:28803', proxy_auth=proxy_auth)
            r = await html.text()
            return r
        except:
            print("error")

    # f = requests.get('http://211775.sooshong.com', headers=headers)
    wzs1 = []

    # Parse the page
    async def parser(respo):
        # Parse the page with BeautifulSoup CSS selectors
        try:
            # Parse the response body
            soup = BeautifulSoup(respo, "lxml")
            # Find the link to the sub-page so that the sub-page can be scraped later
            # Selectors for select() come from the browser: click the element > Inspect > Copy selector
            lianjie = soup.select('#main > div.main > div.intro > div.intros > div.text > p > a')
            lianjie = lianjie[0].get('href')
            wzs1.append(lianjie)
            print(lianjie)
            # Company name
            company = soup.select("#main > div.aside > div.info > div.info_c > p:nth-child(1) > strong")
            company = company[0].text
            # Landline number
            dianhua = soup.select("#main > div.aside > div.info > div.info_c > p:nth-child(3)")
            dianhua = dianhua[0].text.split(":")[1]
            # Mobile number
            phone = soup.select("#main > div.aside > div.info > div.info_c > p:nth-child(4)")
            phone = phone[0].text.split(":")[1]
            # Fax
            chuanzhen = soup.select("#main > div.aside > div.info > div.info_c > p:nth-child(5)")
            chuanzhen = chuanzhen[0].text.split(":")[1]
            # Business model
            jingying = soup.select("#main > div.aside > div.info > div.info_c > p:nth-child(8)")
            jingying = jingying[0].text.split(":")[1]
            # Company address
            address = soup.select('#main > div.aside > div.info > div.info_c > p:nth-child(9)')
            address = address[0].text.split(":")[1]
            # Company profile
            # introduction = soup.select("#main > div.main > div.intro > div.intros > div.text > p")
            # introduction = introduction[0].text.strip()
            data = [company, address, dianhua, phone, chuanzhen, jingying]
            print(data)
            with open('首富网企业7.csv', 'a+', newline='', encoding='GB2312', errors='ignore') as csvfile:
                w1 = csv.writer(csvfile)
                w1.writerow(data)


        except:
            print("Error!")

    async def main(loop):
        async with aiohttp.ClientSession() as sess:
            tasks = []
            for ii in wzs:
                ur = ii
                try:
                    tasks.append(loop.create_task(get_html(sess, ur)))
                except:
                    print('error')
                # Pause 0.1 s between scheduling requests so the site is not flooded
                await asyncio.sleep(0.1)
            finished, unfinished = await asyncio.wait(tasks)
            for i1 in finished:
                await parser(i1.result())

    if __name__ == '__main__':
        t1 = time.time()
        loop = asyncio.get_event_loop()
        loop.run_until_complete(main(loop))
        print("Elapsed time", time.time() - t1)
        print('Detail-page links collected!')
    """

    ########################################################################################################################
    # Stage 3: use a thread pool to scrape the content of each detail page
    ########################################################################################################################
    # Define the function that extracts the fields from each detail page
    def parser(url):
        try:
            res = requests.get(url, headers=headers)
            # Parse the response body
            soup = BeautifulSoup(res.text, 'lxml')
            # Selectors for select() come from the browser: click the element > Inspect > Copy selector
            company = soup.select('#main > div.aside > div.info > div.info_c > p:nth-child(1) > strong')
            company = company[0].text
            name = soup.select('#main > div.aside > div.info > div.info_c > p:nth-child(2)')
            name = name[0].text
            dianhua = soup.select('#main > div.aside > div.info > div.info_c > p:nth-child(3)')
            dianhua = dianhua[0].text.split(':')[1]
            shouji = soup.select('#main > div.aside > div.info > div.info_c > p:nth-child(4)')
            shouji = shouji[0].text.split(':')[1]
            chuanzhen = soup.select('#main > div.aside > div.info > div.info_c > p:nth-child(5)')
            chuanzhen = chuanzhen[0].text.split(':')[1]
            product = soup.select('tr:nth-child(1) > td:nth-child(2)')
            product = product[0].text
            company_type = soup.select('tr:nth-child(2) > td:nth-child(2) > span')
            company_type = company_type[0].text.strip()
            legal_person = soup.select('tr:nth-child(3) > td:nth-child(2)')
            legal_person = legal_person[0].text
            main_address = soup.select('tr:nth-child(5) > td:nth-child(2) > span')
            main_address = main_address[0].text
            brand = soup.select('tr:nth-child(6) > td:nth-child(2) > span')
            brand = brand[0].text
            area = soup.select('tr:nth-child(9) > td:nth-child(2) > span')
            area = area[0].text
            industry = soup.select('tr:nth-child(1) > td:nth-child(4)')
            industry = industry[0].text.strip()
            address = soup.select('#main > div.aside > div.info > div.info_c > p:nth-child(9)')
            address = address[0].text.split(':')[1]
            jingying = soup.select('#main > div.aside > div.info > div.info_c > p:nth-child(8)')
            jingying = jingying[0].text.split(':')[1]
            date = soup.select('tr:nth-child(5) > td:nth-child(4) > span')
            date = date[0].text
            wangzhi = soup.select('tr:nth-child(12) > td:nth-child(4) > p > span > a')
            wangzhi = wangzhi[0].text
            data = [company, date, name, legal_person, shouji, dianhua, chuanzhen, company_type, jingying, industry,
                    product, wangzhi, area, brand, address, main_address]  # collect the fields into one row and print it to the console
            print(data)
        except Exception:
            # Fetching or parsing failed, so there is no row to write
            print('Failed to parse detail page')
            return
        try:
            with open('服装1.csv', 'a', newline='', encoding='GB2312') as csvfile:
                w1 = csv.writer(csvfile)
                w1.writerow(data)
        except UnicodeEncodeError:
            # The row contains characters GB2312 cannot encode; write it to a UTF-8 file instead
            with open('服装2.csv', 'a', newline='', encoding='utf-8-sig') as csvfile:
                w1 = csv.writer(csvfile)
                w1.writerow(data)
            print('Row written with UTF-8 encoding instead')

    # Use a thread pool to speed up the crawl. Each batch holds 50 URLs, so up to 50 threads could be used.
    # Create the executor. The right thread count differs from site to site:
    # too many threads and the site cannot keep up, which loses data.
    executor = ThreadPoolExecutor(max_workers=10)
    # submit() takes the function first, followed by its arguments (more than one is allowed)
    future_tasks = [executor.submit(parser, url) for url in wzs1]
    # Wait for every thread to finish before moving on
    wait(future_tasks, return_when=ALL_COMPLETED)
    print('All information scraped')
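Because several worker threads append to the same CSV file, rows can interleave, and a row that GB2312 cannot encode needs somewhere else to go. The sketch below shows one possible way to handle both concerns. It is not part of the script above: the helper name `append_row`, the module-level lock and the temporary file paths are all assumptions made for illustration.

```python
import csv
import os
import tempfile
import threading

_csv_lock = threading.Lock()  # one lock shared by every worker thread


def append_row(row, primary_path, fallback_path):
    """Append row to primary_path as GB2312, or to fallback_path as UTF-8.

    Returns the path that was written. Encodability is checked before any
    file is opened, so a failing row never leaves a partial line behind.
    """
    try:
        for cell in row:
            str(cell).encode('gb2312')
        path, encoding = primary_path, 'gb2312'
    except UnicodeEncodeError:
        path, encoding = fallback_path, 'utf-8-sig'
    with _csv_lock:  # only one thread writes at a time
        with open(path, 'a', newline='', encoding=encoding) as f:
            csv.writer(f).writerow(row)
    return path


# Usage with throwaway files (hypothetical names)
tmp_dir = tempfile.mkdtemp()
primary = os.path.join(tmp_dir, 'rows_gb2312.csv')
fallback = os.path.join(tmp_dir, 'rows_utf8.csv')

written_ok = append_row(['Acme Ltd', '010-12345678'], primary, fallback)
# An emoji is outside GB2312, so this row goes to the fallback file
written_fallback = append_row(['\U0001F600 Ltd', '000'], primary, fallback)
```

Inside a thread-pool worker, a call such as `append_row(data, '服装1.csv', '服装2.csv')` would take the place of the two `with open(...)` blocks.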
